What is sound?

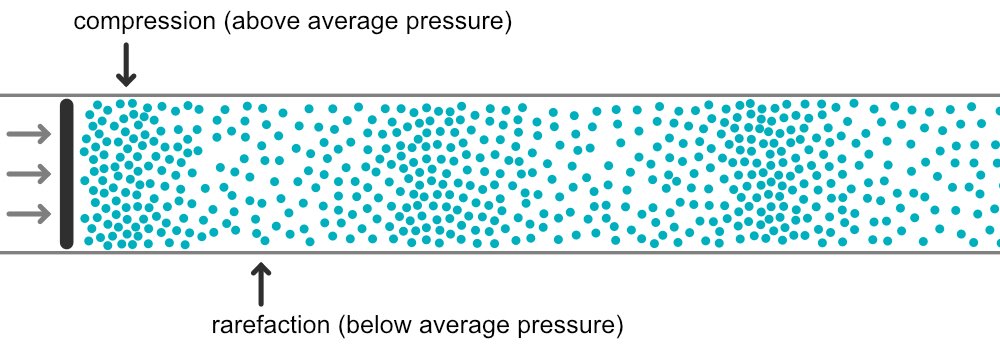

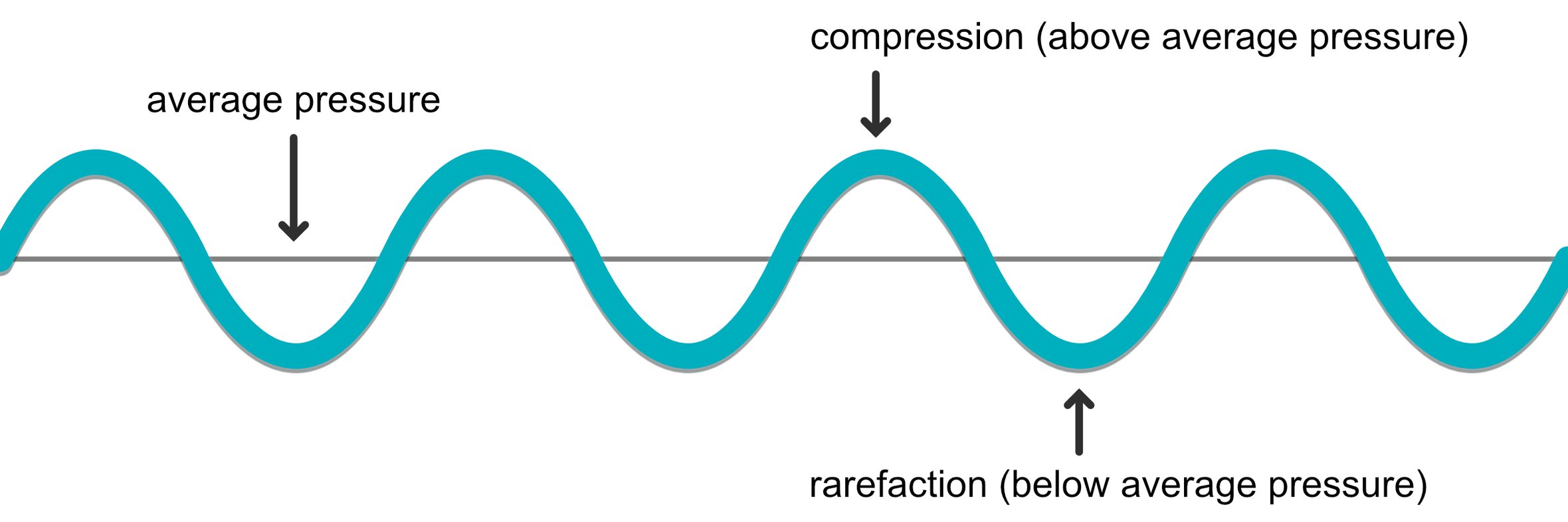

Sound starts with vibration, such as a speaker cone moving in and out or a piano string struck by a hammer. The vibrating object disturbs the surrounding air molecules from their resting positions, which starts a chain reaction. Where molecules are pushed together, they form a region of higher density called a compression. Where they are pulled apart, they form a region of lower density called a rarefaction. As the air particles bounce back and forth and bump into their neighbours, they pass energy away from the vibrating object as a longitudinal wave of alternating compressions and rarefactions. "Longitudinal" means that, in air, the particles oscillate parallel to the direction in which the wave travels. The particles themselves only move back and forth around their resting positions; it is the energy that travels outward.

These small changes in the density of air also mean small changes in air pressure. When we place a microphone somewhere in a room, we are really just measuring these pressure changes at that point. When there is no sound, the air pressure there stays at its static (ambient) level. As the pressure rises above this level (during a compression), the microphone outputs a positive voltage. As the pressure falls below this level (during a rarefaction), the microphone outputs a negative voltage.
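To make the sign convention concrete, here is a minimal Python sketch of an idealised microphone whose output voltage is simply proportional to the deviation from static pressure. The function name `mic_voltage`, the 10 mV/Pa sensitivity and the perfectly linear response are illustrative assumptions, not a model of any real microphone.

```python
def mic_voltage(pressure_pa: float, static_pressure_pa: float, sensitivity_v_per_pa: float) -> float:
    """Idealised microphone: output voltage is proportional to the pressure deviation."""
    # Positive deviation (compression) -> positive voltage,
    # negative deviation (rarefaction) -> negative voltage.
    return sensitivity_v_per_pa * (pressure_pa - static_pressure_pa)


STATIC_PRESSURE = 101_325.0  # standard atmospheric pressure at sea level, in pascals
SENSITIVITY = 0.01           # hypothetical sensitivity: 10 mV per pascal

print(mic_voltage(STATIC_PRESSURE + 1.0, STATIC_PRESSURE, SENSITIVITY))  # compression -> positive
print(mic_voltage(STATIC_PRESSURE - 1.0, STATIC_PRESSURE, SENSITIVITY))  # rarefaction -> negative
print(mic_voltage(STATIC_PRESSURE, STATIC_PRESSURE, SENSITIVITY))        # silence -> 0.0
```

Real microphones are not perfectly linear, but the idea is the same: the signal follows the pressure above and below the static level.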

Components of a Sound Wave

We can describe or measure a sound wave by observing a few of its properties. The attributes most commonly measured are frequency, amplitude, velocity and wavelength. Phase and timbre are a little more complex, so we'll explore them separately in their own articles.

Frequency

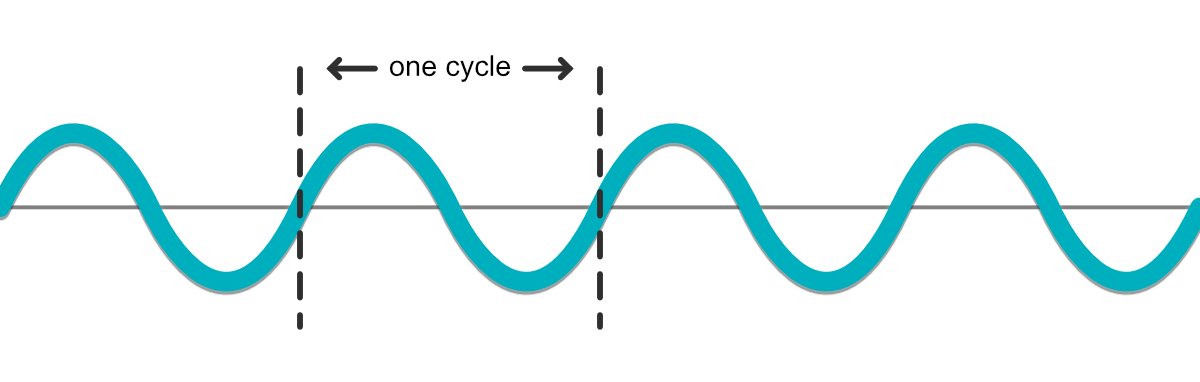

Simple sound waves, such as a pure tone, are periodic: they repeat the same pattern over and over. One trip through a compression and its matching rarefaction is a single period, or cycle. Frequency is the number of these cycles that occur in one second. In audio we measure it in hertz (Hz), named after Heinrich Hertz, where 1 Hz means one cycle per second.

We perceive frequency as pitch, the relative highness or lowness of a sound. A high-frequency wave completes more cycles per second than a low-frequency one, so it has a larger value in hertz and is heard as a higher pitch. At best, humans can perceive frequencies between roughly 20 Hz and 20,000 Hz (20 kHz).
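Because frequency counts cycles per second, the duration of a single cycle (its period) is the reciprocal, T = 1/f. The short Python sketch below illustrates that relationship; the helper names `period_s` and `frequency_from_cycles` are hypothetical, and the loop prints the period at the edges of the audible range and at A440.

```python
def period_s(frequency_hz: float) -> float:
    """Duration of one cycle (one compression plus one rarefaction), in seconds."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return 1.0 / frequency_hz


def frequency_from_cycles(cycles: int, duration_s: float) -> float:
    """Frequency in Hz: number of complete cycles divided by the time they took."""
    return cycles / duration_s


print(frequency_from_cycles(440, 1.0))  # 440 cycles in one second -> 440.0 Hz
for f in (20, 440, 20_000):
    # Period in milliseconds: low edge of hearing, A440, high edge of hearing
    print(f"{f} Hz -> {period_s(f) * 1000:.3f} ms per cycle")
```

A 20 Hz cycle lasts 50 ms, while a 20 kHz cycle lasts only 0.05 ms.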

You may be familiar with A440 as a tuning reference. 'A' is the note (in this case the A above middle C), and 440 is its frequency (440 Hz). As it turns out, doubling a frequency raises the pitch by exactly one octave. So 880 Hz is the A an octave above this one, and 220 Hz is the A an octave below.

Doubling the frequency raises the pitch by one octave. If you're using EQ to hunt down problematic partials, use this knowledge to jump quickly through the octaves of a particular note.
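Since each octave is a doubling, the octaves of a note are just its frequency multiplied or divided by powers of two. The sketch below uses a hypothetical `octaves_of` helper, with the 20 Hz to 20 kHz hearing range as assumed default bounds, to list every octave of a note inside that range. This is one way to pick candidate frequencies when sweeping an EQ.

```python
import math


def octaves_of(frequency_hz: float, low_hz: float = 20.0, high_hz: float = 20_000.0) -> list[float]:
    """Every octave of a note (frequency * 2**n, n an integer) within [low_hz, high_hz]."""
    if frequency_hz <= 0 or low_hz <= 0:
        raise ValueError("frequencies must be positive")
    f = frequency_hz
    # Move up into the range if the starting note is below it...
    while f < low_hz:
        f *= 2
    # ...then step down to the lowest octave that is still in range.
    while f / 2 >= low_hz:
        f /= 2
    # Double upward, collecting each octave until we leave the range.
    octaves = []
    while f <= high_hz:
        octaves.append(f)
        f *= 2
    return octaves


print(octaves_of(440))
print(round(math.log2(20_000 / 20), 2))  # number of octaves spanned by 20 Hz to 20 kHz
```

Starting from A440, this returns every A from 27.5 Hz (the lowest A on a standard 88-key piano) up to 14,080 Hz. The last line shows that the audible range spans just under 10 octaves.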

Amplitude

Amplitude describes the strength of a sound wave, that is, the amount of displacement caused by the vibration. In acoustics it is usually expressed as the size of the pressure changes the wave produces. We perceive amplitude as the loudness of a sound.

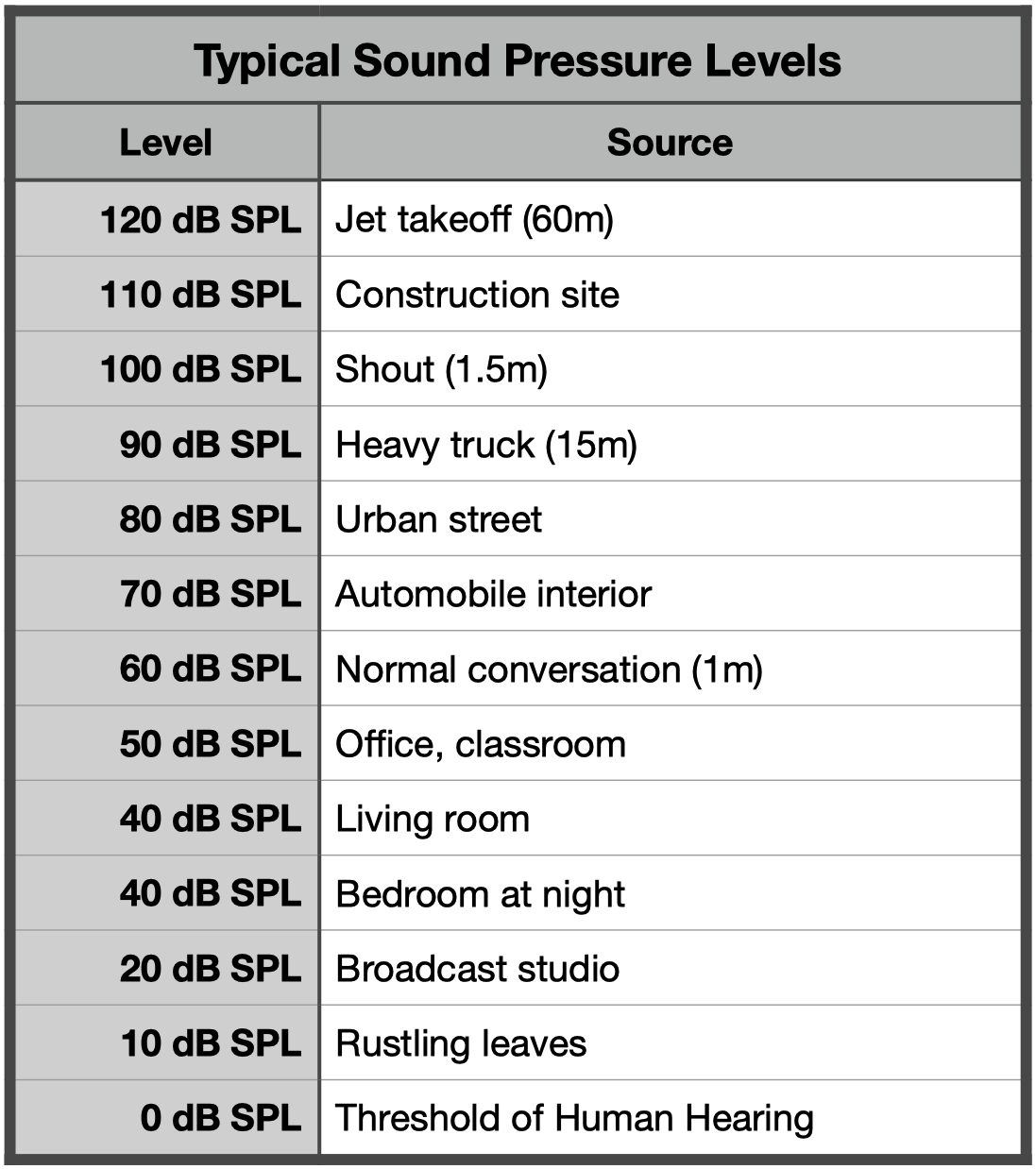

Acoustic amplitude is measured on the dB SPL scale (decibels, sound pressure level). This scale is interesting for two reasons. First, it is referenced to human hearing: 0 dB SPL corresponds to a sound pressure of 20 micropascals, roughly the lower limit of human hearing. Sounds quieter than this reference have negative values, so you may occasionally see dB SPL measurements of very quiet sounds as negative numbers. Second, the dB SPL scale is logarithmic: equal steps in decibels multiply the sound pressure rather than add to it, with every 20 dB representing a tenfold increase in pressure. This is why doubling a dB SPL figure is perceived as much more than twice as loud; going from 40 dB SPL to 80 dB SPL, for example, is a hundredfold increase in sound pressure. Take a look at some of the examples on the following image for reference.
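The dB SPL scale compares an RMS sound pressure p with the 20 µPa reference p0 using L = 20 · log10(p / p0). Here is a minimal Python sketch of that formula and its inverse. The function names are illustrative, and the code assumes the pressures passed in are already RMS values in pascals.

```python
import math

P_REF = 20e-6  # reference pressure for 0 dB SPL: 20 micropascals


def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in dB SPL for an RMS pressure in pascals."""
    return 20 * math.log10(pressure_pa / P_REF)


def pressure_ratio(db_change: float) -> float:
    """Factor by which the sound pressure is multiplied for a given change in dB."""
    return 10 ** (db_change / 20)


print(spl_db(P_REF))            # the reference pressure itself -> 0.0 dB SPL
print(round(spl_db(1.0), 1))    # 1 Pa -> about 94 dB SPL
print(pressure_ratio(40))       # 40 dB -> 80 dB multiplies the pressure by 100
print(round(spl_db(10e-6), 1))  # below the reference -> negative dB SPL
```

A 1 Pa sound works out to about 94 dB SPL, a level commonly used when calibrating microphones, and any pressure below 20 µPa gives a negative result.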

Velocity

Velocity describes the speed of the wave front. Sound moves at different speeds depending on the medium. For instance, it moves faster through water than through air and even faster through steel.

In 20°C air, sound travels at about 343 metres per second (m/s). This may seem like a trivial fact, but in practice it is very useful because it allows us to calculate wavelength.
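The 343 m/s figure depends on the air temperature. As a rough sketch, assuming dry air behaves as an ideal gas, the speed can be estimated from its value at 0°C (about 331.3 m/s). The function name below is hypothetical, and the approximation ignores humidity.

```python
import math


def speed_of_sound_air(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s) at a temperature in °C.

    Ideal-gas approximation: v = 331.3 * sqrt(1 + T / 273.15),
    where 331.3 m/s is the speed at 0 °C and 273.15 converts °C to kelvin.
    """
    return 331.3 * math.sqrt(1 + temp_c / 273.15)


for t in (0, 20, 30):
    print(f"{t} °C -> {speed_of_sound_air(t):.1f} m/s")
```

At 20°C this gives about 343 m/s, matching the figure used throughout this article.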

Wavelength

Wavelength (λ) is the distance between two identical points on adjacent cycles, for example from one compression to the next. It is the physical length that a single cycle of the wave occupies in space. In practice, wavelength is important because of how it relates to acoustics, especially acoustic treatment and soundproofing.

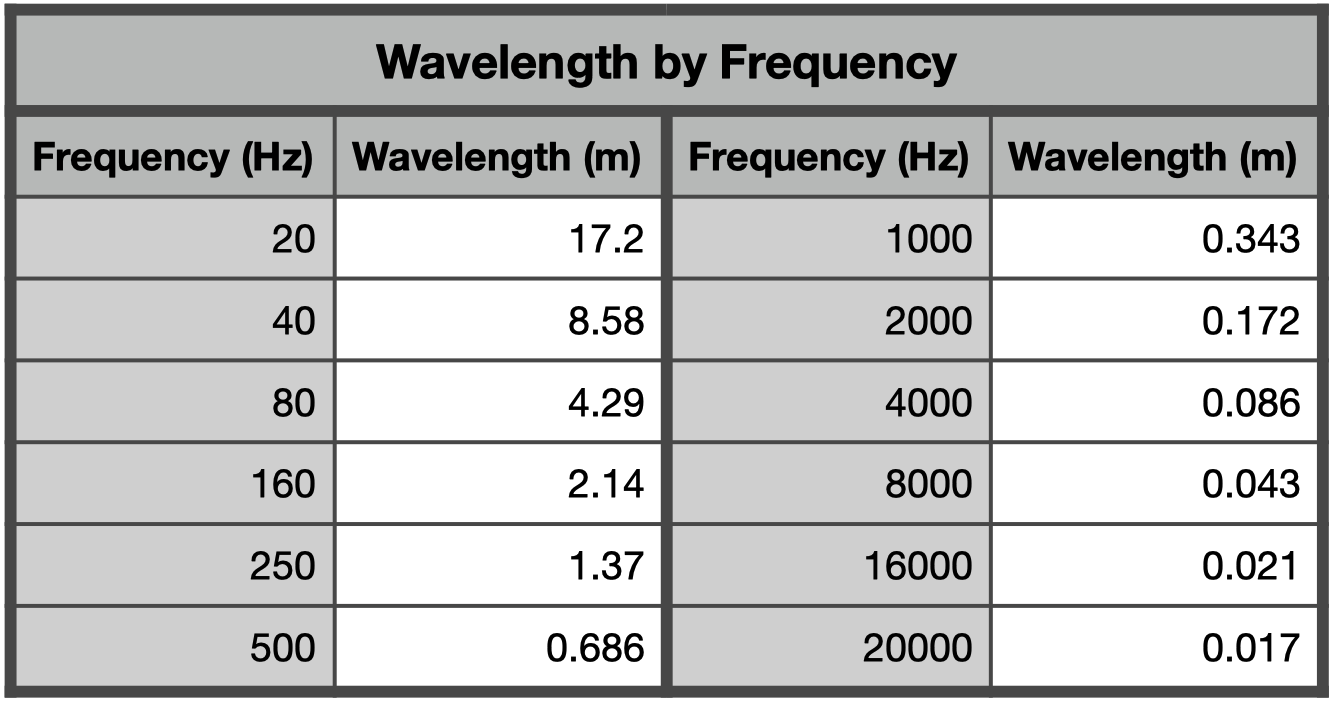

It is much easier to absorb or otherwise control high frequencies than low frequencies because of their wavelengths. Generally speaking, to absorb a sound you need to present it with an absorber or barrier whose size is significant relative to its wavelength. The tricky thing is that the wavelengths of the frequencies we can hear span an enormous range: in 20°C air, from about 17 metres at 20 Hz down to about 1.7 centimetres at 20 kHz.

We can calculate wavelength by dividing velocity by frequency (λ = v/f). For example, to find the wavelength of a 500 Hz sound wave, we can use the velocity of sound in 20°C air, 343 m/s:

λ = v/f

λ = 343/500

λ = 0.686 metres
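The same calculation in Python, as a small sketch assuming the 343 m/s figure for 20°C air (the `wavelength_m` name is illustrative). It reproduces the 500 Hz example and also prints the wavelengths at the edges of the audible range.

```python
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in 20 °C air


def wavelength_m(frequency_hz: float, velocity_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Wavelength in metres: λ = v / f."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return velocity_m_s / frequency_hz


print(wavelength_m(500))  # the worked example: 0.686 m
for f in (20, 20_000):
    print(f"{f} Hz -> {wavelength_m(f):.4f} m")
```

Passing a different `velocity_m_s` lets you repeat the calculation for another temperature or medium.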

Think about this the next time you plan acoustic treatment for your music space. You might be tempted to put some thin foam on the walls of your studio in the hope of controlling sound. For high frequencies? Sure, no problem. For low frequencies? Forget about it.
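To put numbers on the foam example, the sketch below uses a commonly quoted rule of thumb for porous absorbers such as foam mounted on a wall: they work well once their depth is around a quarter of the wavelength. Treat that rule, the helper name `quarter_wavelength_cm` and the 2.5 cm foam depth as assumptions for illustration; real absorption also depends on the material and how it is mounted.

```python
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in 20 °C air
FOAM_DEPTH_CM = 2.5         # hypothetical thin foam panel


def quarter_wavelength_cm(frequency_hz: float) -> float:
    """A quarter of the wavelength, in centimetres (rule-of-thumb absorber depth)."""
    return (SPEED_OF_SOUND_M_S / frequency_hz) / 4 * 100


for f in (100, 1000, 4000, 8000):
    needed = quarter_wavelength_cm(f)
    verdict = "deep enough" if FOAM_DEPTH_CM >= needed else "too thin"
    print(f"{f:>5} Hz: quarter wavelength ~{needed:.1f} cm -> {FOAM_DEPTH_CM} cm foam is {verdict}")
```

Under this rule of thumb, a thin panel only reaches the upper frequencies, while handling 100 Hz would call for a depth of the order of 85 cm.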

This effect is also observable in many everyday situations. We can hear the bass escaping from a passing car, while its closed windows block the highest frequencies. We hear the rumble of a subway train even from an adjacent building, but not its high frequencies. Even placing your hand in front of your mouth while speaking damps the high-frequency content, while the midrange frequencies are far less affected.

See the following table for some examples of wavelengths for the frequencies we can hear.